“Based on a True Story”

Join us for this short and boring novel of teamwork, excellence and success.

The Team

It all started on a Thursday morning. The team was happy: an essential refactoring had gone live the day before, and everything was running smoothly. As with any proper refactoring, the internals of the system had been changed, but no external impact was expected.

The two Backend Engineers who had recently joined the team, Alice and Bob, were quickly getting a good grasp of the several services they were responsible for together with the team’s most experienced engineer, Charlie, who was out on vacation. But there was nothing to worry about. Life was good: development was simply continuing as planned.

First contact

A few hours later, one of the team’s stakeholders noticed something weird: there was more information to deal with in the system’s UI than usual. It was not critical though. The amount of information was not that high, just above normal, but it was definitely weird and unexpected. Alice and Bob investigated but couldn’t find anything suspicious, so they decided to wait and observe a bit more.

In the meantime, the product manager, Dave, was also uneasy and was checking some numbers, trying to understand the situation further. More data was still necessary though, and for that, more time was needed.

Getting Serious

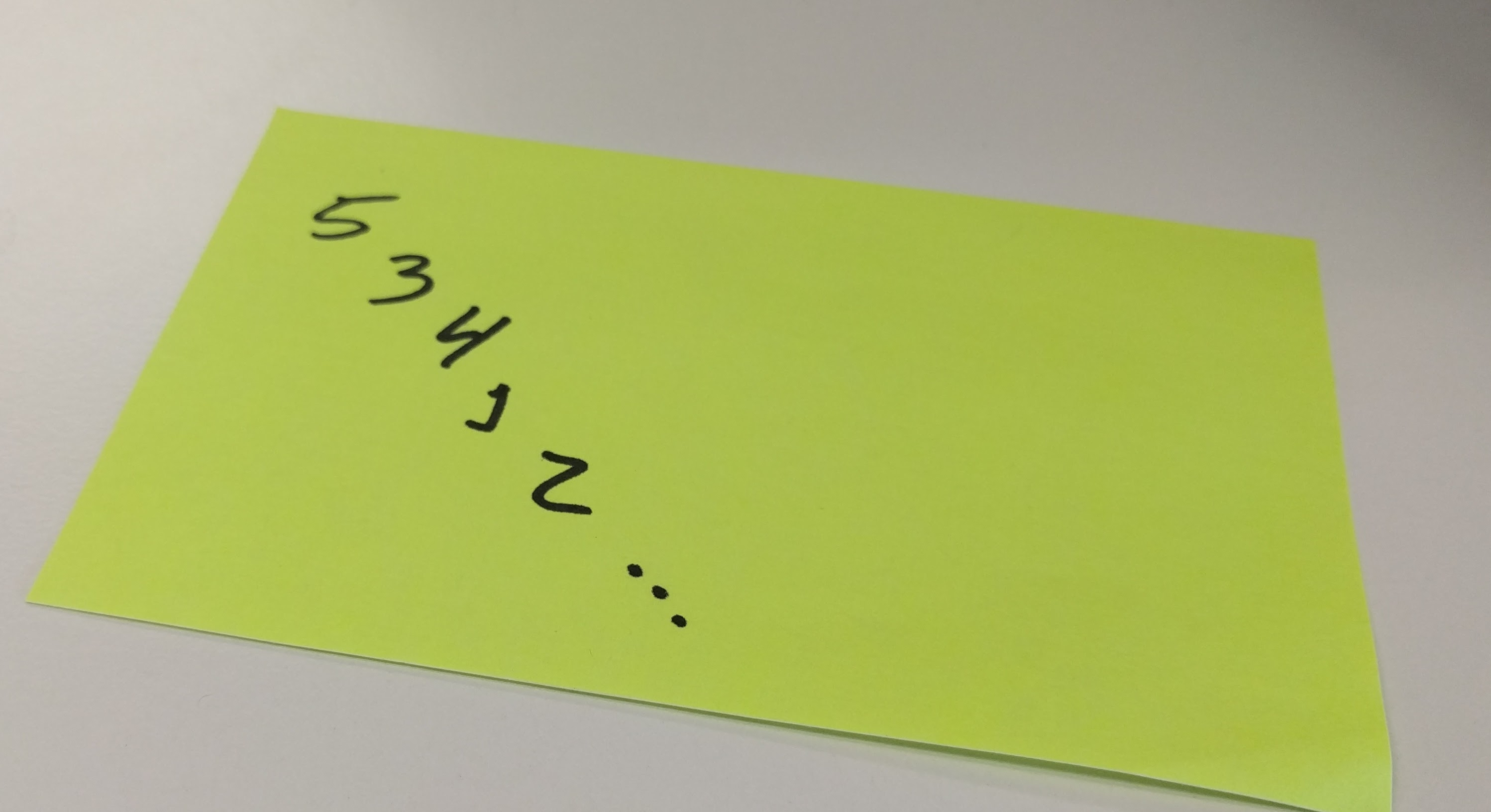

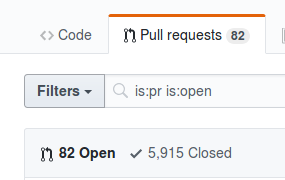

Unfortunately, by the end of the day it was clear that the situation was getting worse. The amount of data kept accumulating, more and more. Something was definitely up, and it was more than just “weird”: it was serious and would cause problems if not fixed.

But, as mentioned before, nothing suspicious had been found yet. At this point, given that things were getting serious, the team decided to stop everything and focus on the investigation. It was time to sort this out, and the team was determined to do so.

The Rollback

One suspicion that kept bothering everyone was the refactoring done the day before. It was too close to the incident to be a coincidence. At the same time, no direct correlation could be found in the data, nor in the timelines of the events. There was no proof that the refactoring caused any problems, but also no proof that it did not.

The investigation was moving a bit slowly though, so the team opted to roll back the previous day’s changes anyway and call it a day. The investigation would continue the next day, when everyone would be rested and ready for a fresh start, with a clear mind.

Deep Diving

With data accumulated during the night after the rollback, the team now had at least one confirmation: the refactoring did not cause the problems. The excessive data was still accumulating, even though the old code was now running.

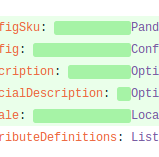

The fresh set of minds showed results though. Some traces were finally found in the logs, pointing to where in the chain of services the problem could be. A careful analysis, nicely pointed out by Dave, exposed the spot between two systems where the data seemed to be fanning out. With the possible culprit in mind, the team knew which service to dig into, even though it was not yet clear what they were looking for.

Looking for the truth

Alice and Bob promptly analysed both the source code and logs of the service. Everything seemed fine in general, but the traces found in the logs before were still repeating, and started to make more sense. It seemed like, at a place where half of the data received should be halted, everything was going through. This hypothesis matched the data collected by Dave. The team was getting closer and Alice and Bob were quite relieved… and curious.

Now, what could be causing that behaviour? It was definitely not in the service itself, or at least no evidence of that could be found. Further investigation pointed to a different service that offered the information used to halt the data. And after more digging, finally, the answer: the downstream service was always replying with “go ahead” signals.

Just kick it with your boots

At the end of the day, the team responsible for the downstream service mitigated the problem with a classic manoeuvre: restarting it. This didn’t stop our heroes, Alice and Bob, from looking further into the problem. They were really curious to figure out what was behind all the trouble they had just gone through.

Alice and Bob went to the source code of the downstream system and checked it for problems. It took them some time, since they were not familiar with it, but they found the exact problematic line and communicated that to the downstream team.

No, not again please

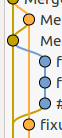

Crisis averted, time for some due diligence. The first thing was to write a Post Mortem, to ensure everything around the problem was investigated and documented and, with that, to prevent it from happening again in the future. Alice and Bob took care of this quite fast: the whole time the incident was ongoing, they had already been documenting facts and a timeline of the events. Going from that to a full Post Mortem was quite an easy step.

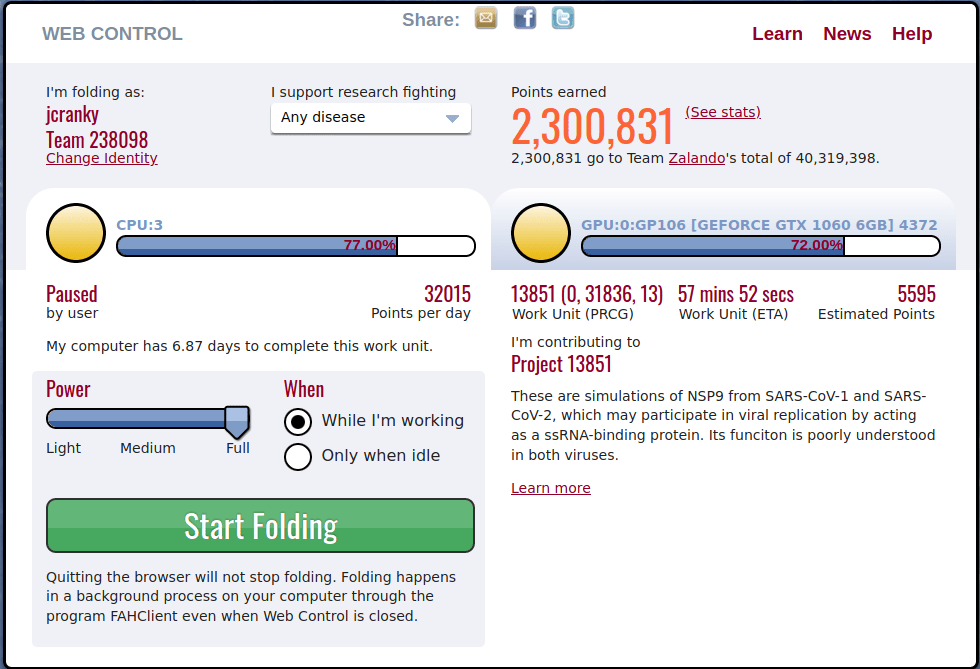

In the end, the team now had a good idea of what to do to avoid such cases in the future. Other than actually getting the root cause fixed, they also identified that the notification of the problem was broken: instead of being made aware of the issue by stakeholders, they would feel much better if there was some alert firing. This would allow the team to act in a more proactive fashion.
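
To make the alert idea a bit more concrete, here is a minimal sketch of what such a check could look like. It is not the team’s actual alert: the names `WindowCounts` and `should_alert`, the thresholds, and the assumption that roughly half of the received data should be halted (as described earlier in the story) are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class WindowCounts:
    """Counts for one observation window (hypothetical metric names)."""
    received: int   # items that reached the filtering step
    forwarded: int  # items that were let through


def should_alert(counts: WindowCounts,
                 expected_ratio: float = 0.5,
                 tolerance: float = 0.2,
                 min_samples: int = 100) -> bool:
    """Return True when the forwarded share drifts too far from the expected one."""
    if counts.received < min_samples:
        return False  # too little traffic in this window to judge
    ratio = counts.forwarded / counts.received
    return abs(ratio - expected_ratio) > tolerance


if __name__ == "__main__":
    # A normal window: about half of the data goes through.
    print(should_alert(WindowCounts(received=1000, forwarded=510)))
    # The incident: everything goes through.
    print(should_alert(WindowCounts(received=1000, forwarded=1000)))
```

A check like this, evaluated on every window, would have flagged the incident as soon as the share of forwarded data moved away from its usual level, instead of waiting for a stakeholder to notice.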

Rollback the rollback

With everything under control again, and a plan to keep it like that, it was time to go back to business. There were a few things to be kept in mind. They would have to re-deploy the refactoring from the beginning of this tale. They would also have to work on action items from the Post Mortem. Finally, feature development should resume.

The whole team knew what to do. Together, they planned how to act on all of the things mentioned above and, in a few days, everything was back on track. The incident handling was a complete success, from beginning to end, and they were ready for the next one. They were just curious to see what Charlie would have to say about all of that when he got back from vacation.

Intuition failure

The story is not only composed of our heroes and the people who got directly involved or affected. Like a true team of teams, everyone wanted to help, one way or another. Another one of the team’s stakeholders came up with an idea: how about having specific days when deployments would happen, like Mondays, so that the team would have more time to act on potential problems?

While this kind of idea is quite common and pops up time and again in the industry, the team knew that it was not the right thing to do. They politely thanked the stakeholder and declined. At the end of the day, they knew they would be ready if anything happened at any time. On top of that, this incident was not even caused by a deployment in the first place.

We are excellent, we move forward!

Another, perhaps non-intuitive, fact was that the team came out of this stronger than before. They were more confident they could handle problems even when Charlie was not around. They knew they could navigate through all the team’s services, even though they hadn’t been part of writing most of those systems. And they knew that, if necessary, they would receive the support needed to investigate issues and fix root causes, without pressure.

When something negative happens in our lives, we can feel a bit down. At work, this could be an incident that then impacts other people, and maybe even causes monetary loss. But if we look at such events through a positive lens, there is always a bright side. They are always opportunities to learn and to improve, and to come out of them stronger.

I am quite curious myself to see what awaits our heroes on their journey ahead. What about you?